TLDR;

This lecture introduces convolutional neural networks (CNNs), focusing on image representation, the limitations of fully connected neural networks for image processing, data augmentation techniques, and data storage methods for CNN models. Key points include:

- Understanding image representation in terms of pixels and channels (RGB, grayscale, binary).

- Recognizing the spatial relationship and local dependencies between pixels, which are lost in fully connected networks.

- Applying data augmentation techniques to increase the size and diversity of training datasets, improving model generalization and reducing overfitting.

- Storing image data in a structured directory format for use with the

flow_from_directoryfunction in Keras.

Introduction to CNNs [0:00]

The lecture series transitions to convolutional neural networks (CNNs) after covering the fundamentals of neural networks, including single and multi-layer perceptrons, activation functions, optimizers, and regularization techniques. Weeks three and four will focus on CNNs, covering image representation, CNN architecture, regularization techniques, and implementation using Keras. Week four will explore CNN variants like AlexNet, VGGNet, GoogleNet, ResNet, Inception, and transfer learning, including implementing these models with and without transfer learning, and building ensemble models.

Fundamentals of Image Representation [2:40]

The lecture covers the fundamentals of image representation, including RGB (color), grayscale, and binary images. It explains that a system reads an image as a grid of pixels, each represented by a numerical value indicating intensity. The dimensions of an image are defined by the number of pixels along its height and width, forming a matrix of pixel values. For grayscale images, pixel values define brightness, while for RGB images, they define color intensity.

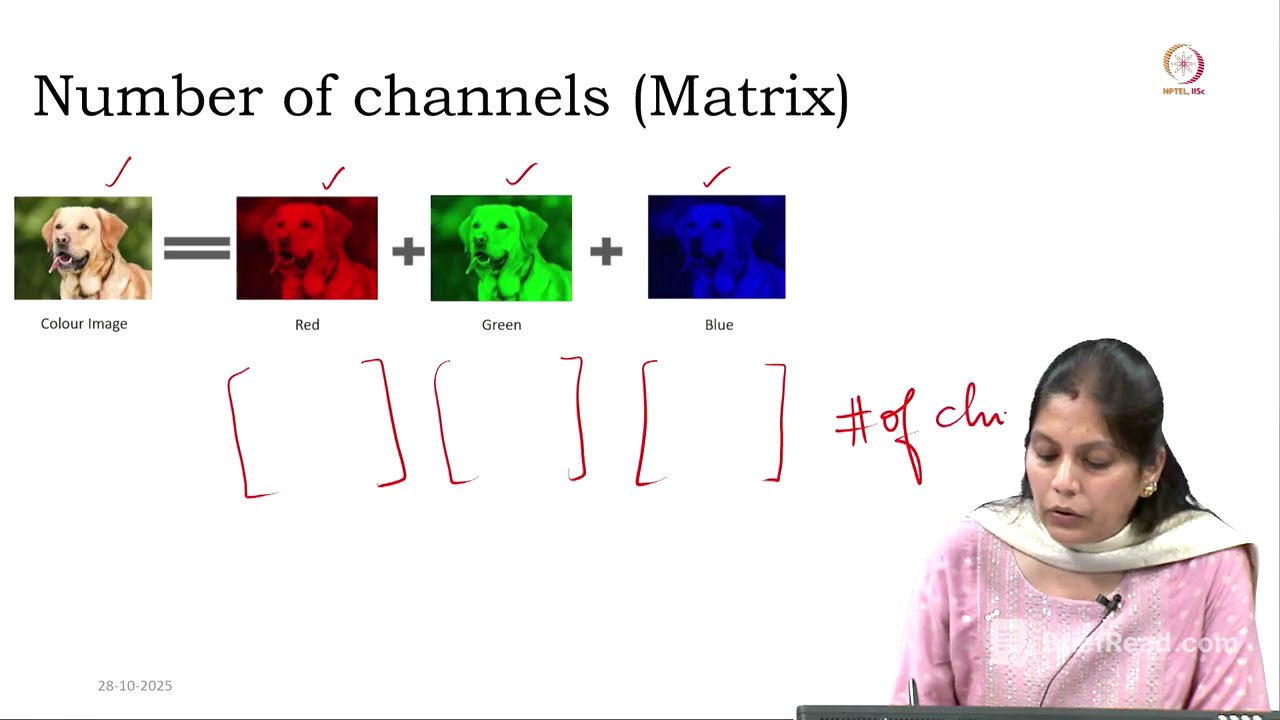

Number of Channels for Image Types [7:36]

The lecture details the number of channels for different image types. Color images (RGB) have three channels, representing the red, green, and blue components. Each pixel is a combination of three values, one for each color component. Grayscale images have one channel, with values ranging from 0 to 255, representing different shades of gray. Binary images also have one channel, with pixel values of either 0 or 1, representing black or white.

Limitations of Fully Connected Neural Networks for Images [13:17]

The lecture explains why multi-layer perceptrons (MLPs) are not suitable for image processing. Images are represented as matrices with rows, columns, and channels. Using an MLP requires flattening this matrix into a single vector, which destroys the spatial relationship between pixels. This spatial relationship includes how pixels are positioned and related to each other, and its loss means the local dependencies, such as edges and corners, are also lost. Additionally, MLPs treat each pixel as an independent feature, ignoring the correlation between neighboring pixels. The high dimensionality of images leads to an explosion in the number of parameters, making the model complex and prone to overfitting.

Data Augmentation Techniques [27:26]

The lecture introduces data augmentation as a technique to artificially increase the size and diversity of the training dataset by modifying existing data. This helps the model generalize better and reduces overfitting. The first step in data augmentation is rescaling, where pixel values are normalized by dividing them by 255 to bring them between 0 and 1, avoiding unintentional bias. Keras provides an ImageDataGenerator for this purpose, with parameters like rescale, shear_range (slanting the image), zoom_range, horizontal_flip, and vertical_flip.

Data Augmentation Parameters in Detail [41:15]

The lecture continues discussing data augmentation parameters, including width_shift_range and height_shift_range, which shift the image horizontally and vertically, respectively. The amount of shift is determined by a fraction of the image's width or height. When shifting the image, empty spaces are filled using the fill_mode parameter, which can be set to nearest (repeats nearest pixel values), constant (fills with a constant value, typically 0), wrap (uses data that has come out of the image), or reflect (uses mirror reflections). Rotation is also discussed, where the image is rotated by a specified degree. Data augmentation is applied only to the training dataset, not the test dataset.

Data Augmentation Process and Data Storage for CNN Models [52:36]

The lecture explains the data augmentation process with an example, detailing how different transformations are applied to each image in a training dataset. It also covers how to store data for CNN models, recommending a folder structure with subfolders for training and testing data, and further subfolders for class labels (e.g., COVID and non-COVID). The flow_from_directory function in Keras is used to read images from this directory structure, automatically labeling them based on the subfolder names. Parameters for flow_from_directory include the path to the directory, target_size (to resize images to a consistent size), batch_size, and class_mode (binary or categorical).